Sally Kang

why join the navy.

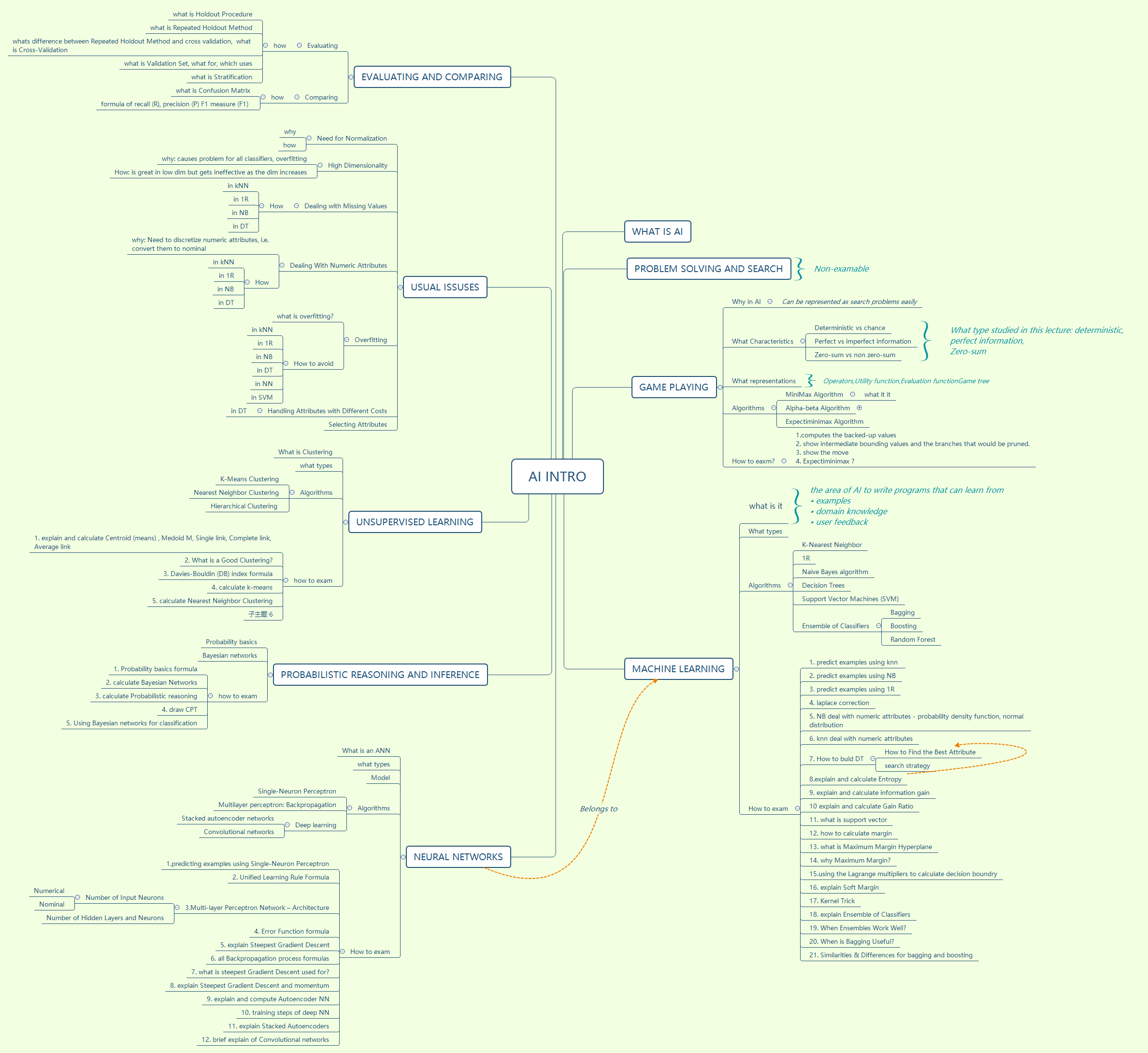

Notes for the introduction of AI

AI Introduction

What is AI

Problem solving and search

Game playing

Why in AI

- Can be represented as search problems easily

What Characteristics

- Deterministic VS chance

- Perfect VS imperfect information

- Zero-sum VS non zero-sum

What types studied in this lecture:

- Deterministic,

- Perfect information,

- Zero-sum

Algorithms

- MiniMax Algorithm

- what it is

- Alpha-beta Algorithm

- Expectiminimax Algorithm

How to exam?

- computes the backed-up values

- show intermediate bounding values and the branches that would be pruned.

- show the move

- Expectiminimax? Operators, Utility function, Evaluation function, Game tree (what representations)

Machine learning

what is it

what types

Algorithms

- K-Nearest Neighbor

- 1R

- Naïve Bayes algorithm

- Decision Trees

- Support Vector Machines (SVM)

- Ensemble of Classifiers

- Bagging

- Boosting

- Random Forest

How to exam

- predict examples using kNN

- predict examples using NB

- predict examples using 1R

- Laplace correction

- NB deal with numeric attributes - probability density function, normal distribution

- kNN deal with numeric attributes

- How to build DT

- How to Find the Best Attribute

- explain and calculate Entropy

- explain and calculate information gain

- explain and calculate Gain Ratio

- what is support vector

- how to calculate margin

- what is Maximum Margin Hyperplane

- why Maximum Margin?

- using the Lagrange multipliers to calculate decision boundary

- explain Soft Margin

- Kernel Trick

- explain Ensemble of Classifiers

- When Ensembles Work Well?

- When is Bagging Useful?

- Similarities & Differences for bagging and boosting
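For the "predict examples using kNN" item, a minimal sketch of k-nearest-neighbor prediction with Euclidean distance and majority voting may help. The function name `knn_predict`, the `(features, label)` tuple layout, and the toy data are assumptions for illustration only.

```python
import math
from collections import Counter

def knn_predict(train, query, k=3):
    """Predict the label of `query` by majority vote among its k nearest
    training examples. `train` is a list of (features, label) pairs."""
    # Sort training examples by Euclidean distance to the query point.
    neighbors = sorted(train, key=lambda ex: math.dist(ex[0], query))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]

# Hypothetical toy data with two numeric attributes.
train = [((1, 1), "a"), ((1, 2), "a"), ((2, 2), "a"),
         ((8, 8), "b"), ((9, 8), "b")]
print(knn_predict(train, (2, 1), k=3))
print(knn_predict(train, (8, 9), k=3))
```

Because the distance sums over all numeric attributes, attributes with large ranges dominate unless they are normalized first.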

What is it: the area of AI concerned with writing programs that can learn from examples, domain knowledge, and user feedback
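For the entropy, information gain, and gain ratio items in the decision tree part of this section, the calculations can be sketched as follows. The function names and the 14-example toy attribute (5 "sunny", 4 "overcast", 5 "rainy") are assumptions chosen for illustration.

```python
import math
from collections import Counter

def entropy(labels):
    """Entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((c / total) * math.log2(c / total)
                for c in Counter(labels).values())

def information_gain(values, labels):
    """Entropy of the labels minus the weighted entropy after
    splitting on the attribute values."""
    total = len(labels)
    remainder = 0.0
    for v in set(values):
        subset = [l for a, l in zip(values, labels) if a == v]
        remainder += len(subset) / total * entropy(subset)
    return entropy(labels) - remainder

def gain_ratio(values, labels):
    """Information gain divided by the split information
    (the entropy of the attribute values themselves)."""
    split_info = entropy(values)
    return information_gain(values, labels) / split_info if split_info > 0 else 0.0

# Hypothetical toy attribute: 9 "yes" and 5 "no" examples overall.
outlook = ["sunny"] * 5 + ["overcast"] * 4 + ["rainy"] * 5
play = (["yes"] * 2 + ["no"] * 3) + ["yes"] * 4 + (["yes"] * 3 + ["no"] * 2)
print(round(entropy(play), 3))
print(round(information_gain(outlook, play), 3))
print(round(gain_ratio(outlook, play), 3))
```

The best attribute under this criterion is the one with the highest information gain; gain ratio additionally penalizes attributes that split the data into many small branches.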

Neural networks

What is an ANN

What types

Model

Algorithms

- Single-Neuron Perceptron

- Multilayer perceptron: Backpropagation

- Deep learning

- Stacked autoencoder networks

- Convolutional networks

How to exam

- predicting examples using Single-Neuron Perceptron

- Unified Learning Rule Formula

- Multi-layer Perceptron Network – Architecture

- Number of Input Neurons

- Numerical

- Nominal

- Number of Hidden Layers and Neurons

- Error Function formula

- explain Steepest Gradient Descent

- all Backpropagation process formulas

- what is steepest Gradient Descent used for?

- explain Steepest Gradient Descent and momentum

- explain and compute Autoencoder NN

- training steps of deep NN

- explain Stacked Autoencoders

- brief explanation of Convolutional networks
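For the single-neuron perceptron items above, here is a minimal sketch of training and prediction. It assumes a step (hard-limit) transfer function and the update rule w_new = w_old + e·p, b_new = b_old + e with e = t - a; whether this matches the exact "Unified Learning Rule Formula" in the lecture is an assumption, and the function names and AND data are made up for illustration.

```python
def hardlim(n):
    """Step transfer function: 1 if n >= 0, else 0."""
    return 1 if n >= 0 else 0

def predict(weights, bias, inputs):
    """Output of a single neuron for one input vector."""
    return hardlim(sum(w * x for w, x in zip(weights, inputs)) + bias)

def train_perceptron(examples, n_inputs, max_epochs=100):
    """Train on (inputs, target) pairs, starting from zero weights,
    until an epoch passes with no errors or max_epochs is reached."""
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(max_epochs):
        errors = 0
        for inputs, target in examples:
            e = target - predict(weights, bias, inputs)  # error e = t - a
            if e != 0:
                errors += 1
                weights = [w + e * x for w, x in zip(weights, inputs)]
                bias += e
        if errors == 0:
            break  # every training example is classified correctly
    return weights, bias

# Logical AND is linearly separable, so training stops with zero errors.
and_data = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(and_data, n_inputs=2)
print([predict(w, b, x) for x, _ in and_data])
```

A single neuron like this only finds a linear decision boundary; a problem such as XOR would never reach zero errors, which is one motivation for multilayer perceptrons.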

Probabilistic reasoning and inference

Probability basics

Bayesian networks

how to exam

- Probability basics formula

- calculate Bayesian Networks

- calculate Probabilistic reasoning

- draw CPT

- Using Bayesian networks for classification

Unsupervised learning

What is Clustering

what types

Algorithms

- K-Means Clustering

- Nearest Neighbor Clustering

- Hierarchical Clustering

How to exam

- explain and calculate Centroid (means), Medoid M, Single link, Complete link, Average link

- What is a Good Clustering?

- Davies-Bouldin (DB) index formula

- calculate k-means

- calculate Nearest Neighbor Clustering
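For the "calculate k-means" item, the assign-then-recompute loop can be sketched as below. It assumes squared Euclidean distance and initial centroids supplied by the caller; the function name `kmeans` and the toy points are illustrative assumptions.

```python
def kmeans(points, centroids, max_iters=100):
    """Plain k-means: assign each point to its nearest centroid, then
    recompute every centroid as the mean of its cluster, until stable."""
    for _ in range(max_iters):
        clusters = [[] for _ in centroids]
        for p in points:
            # Squared Euclidean distance to every centroid.
            distances = [sum((a - b) ** 2 for a, b in zip(p, c))
                         for c in centroids]
            clusters[distances.index(min(distances))].append(p)
        # New centroid = per-dimension mean; an empty cluster keeps its old one.
        new_centroids = [
            tuple(sum(dim) / len(cluster) for dim in zip(*cluster)) if cluster else c
            for cluster, c in zip(clusters, centroids)
        ]
        if new_centroids == centroids:
            break  # centroids did not move, so assignments are final
        centroids = new_centroids
    return centroids, clusters

# Hypothetical toy points forming two obvious groups.
points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
centroids, clusters = kmeans(points, centroids=[(1, 1), (8, 8)])
print(centroids)
print(clusters)
```

The result depends on the initial centroids, so a hand calculation should always state which starting centroids were used.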

Usual issues

Need for Normalization

- why

- how
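One common way to answer the "how" is min-max scaling of each numeric attribute to the range [0, 1]. The sketch below assumes that method; the function name `min_max_normalize` and the sample values are illustrative, and the lecture may also cover other schemes.

```python
def min_max_normalize(column):
    """Rescale a list of numeric values to [0, 1]."""
    lo, hi = min(column), max(column)
    if hi == lo:
        # Constant attribute: avoid division by zero, map everything to 0.
        return [0.0 for _ in column]
    return [(x - lo) / (hi - lo) for x in column]

print(min_max_normalize([10, 20, 30]))
```

Without this step, distance-based methods such as kNN and k-means are dominated by whichever attribute has the largest numeric range.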

High Dimensionality

- why: causes problem for all classifiers, overfitting

- How: works well in low dimensions but becomes ineffective as the dimensionality increases

Dealing with Missing Values

- How

- in kNN

- in 1R

- in NB

- in DT

Dealing With Numeric Attributes

- why: Need to discretize numeric attributes, i.e. convert them to nominal

- how

- in kNN

- in 1R

- in NB

- in DT

Overfitting

- what is overfitting?

- how to avoid

- in kNN

- in 1R

- in NB

- in DT

- in NN

- in SVM

Handling Attributes with Different Costs

- In DT

- Selecting Attributes

Evaluating and Comparing

Evaluating

- how

what is Holdout Procedure

what is Repeated Holdout Method

what is the difference between the Repeated Holdout Method and Cross-Validation

what is Cross-Validation

what is Validation Set, what for, which uses

what is Stratification
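To make the cross-validation item concrete, here is a minimal sketch of how k-fold cross-validation partitions the example indices. It deliberately does no shuffling and no stratification; the function name `k_fold_splits` is an assumption for illustration.

```python
def k_fold_splits(n_examples, k):
    """Return k (train_indices, test_indices) pairs.

    Every example appears in exactly one test fold, unlike repeated
    holdout, where random test sets may overlap between repetitions.
    """
    indices = list(range(n_examples))
    # Spread the remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [n_examples // k + (1 if i < n_examples % k else 0)
                  for i in range(k)]
    splits, start = [], 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        splits.append((train, test))
        start += size
    return splits

for train, test in k_fold_splits(10, 3):
    print(test)
```

The classifier is trained k times, each time on the training indices and evaluated on the held-out fold, and the k accuracy values are averaged. A stratified variant would additionally keep the class proportions roughly equal in every fold.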

Comparing

- how